Facial recognition technology (FRT) has evolved rapidly over the past decade and is now used across industries worldwide. In 2025, it underpins security systems, retail services, healthcare workflows, and more. While FRT promises improved security and convenience, it also raises serious concerns about privacy, ethics, and data security. This article explores how facial recognition technology works, its applications, recent technical advances, privacy issues, and the global regulatory landscape.

What is Facial Recognition Technology?

Facial recognition technology involves identifying or verifying individuals by analyzing the unique features of their faces. It uses biometric data, comparing facial patterns with stored images to determine identity. There are two main types of FRT:

- 1:1 Verification: The system compares a live image with the stored image of one claimed identity to confirm that the person is who they claim to be.

- 1:N Identification: The system compares a live image against every entry in a larger database to find out who the person is, if a match exists.
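
The difference between the two modes can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: it assumes faces have already been converted to numeric vectors (often called embeddings or templates), and the three-dimensional vectors, the names in `gallery`, and the `0.8` threshold are all hypothetical values chosen for readability.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def verify(probe, reference, threshold=0.8):
    """1:1 verification: does the probe match one claimed identity?"""
    return cosine_similarity(probe, reference) >= threshold

def identify(probe, gallery, threshold=0.8):
    """1:N identification: best match in the gallery, or None if too weak."""
    best_name, best_score = None, -1.0
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None

# Toy 3-dimensional templates (hypothetical; real templates are much longer).
gallery = {
    "alice": [0.9, 0.1, 0.2],
    "bob": [0.1, 0.8, 0.3],
}
probe = [0.85, 0.15, 0.25]

print(verify(probe, gallery["alice"]))      # True
print(identify(probe, gallery))             # alice
print(identify([0.0, 0.0, 1.0], gallery))   # None
```

Verification makes one comparison, while identification makes one comparison per enrolled person, which is why 1:N searches become slower and more error-prone as the database grows.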

By 2025, the accuracy of facial recognition systems has improved dramatically: many systems now report accuracy rates exceeding 99.5%, and some reach 99.97% under optimal conditions.

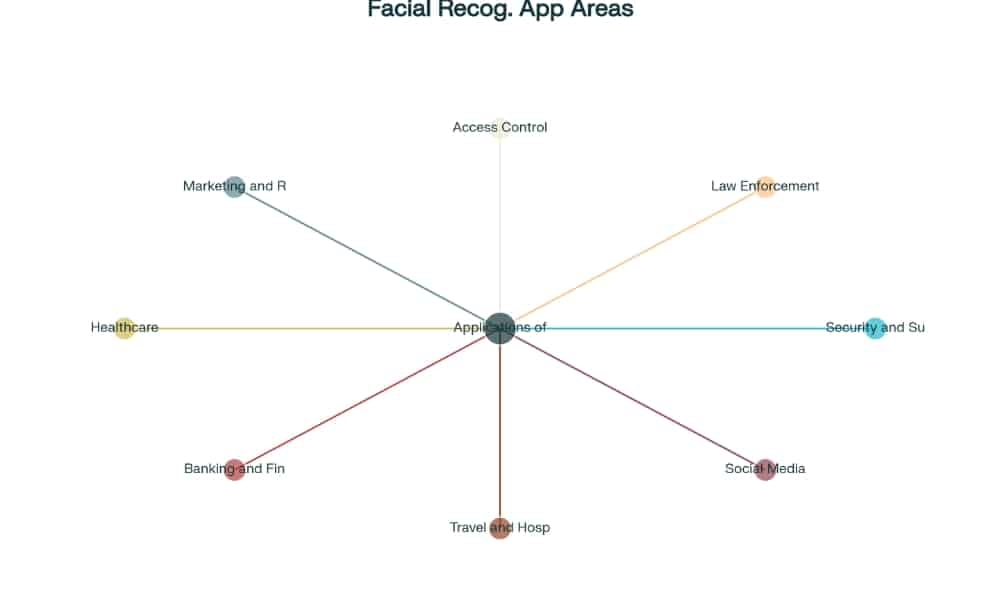

Applications of Facial Recognition Technology in 2025

Facial recognition technology is now used across various sectors, from security to customer service, and its applications continue to grow. Let’s explore some of its key uses in 2025.

1. Security and Surveillance

FRT is widely used to enhance security, especially in public spaces. Integrated into surveillance systems, it allows cities to monitor individuals in real time and respond to security threats more efficiently. For example:

- Urban Surveillance: Cities such as Melbourne are considering adding AI and facial recognition to their CCTV networks to detect and track suspects.

- Event Security: At large events like concerts or sports games, FRT is used to monitor crowds, identify potential threats, and prevent unauthorized access.

2. Border Control and Immigration

Airports and border agencies have integrated facial recognition systems to enhance security and streamline operations:

- U.S. Customs and Border Protection is pursuing a real-time facial recognition system that would photograph and verify every occupant of vehicles crossing the border.

- The European Union uses biometric facial recognition data to facilitate quicker and more secure border crossings.

3. Financial Services

Banks and other financial institutions have adopted facial recognition technology to improve security and prevent fraud:

- Secure Transactions: Some ATMs now use facial recognition to let customers withdraw money without a card.

- Fraud Prevention: FRT helps detect fraudulent activities by verifying customers during online banking and financial transactions.

4. Retail and Customer Experience

Facial recognition technology is revolutionizing retail by improving the customer experience and boosting security:

- Personalized Shopping: Retailers use FRT to recognize repeat customers and offer personalized experiences, such as tailored promotions and discounts.

- Loss Prevention: FRT is used in stores to flag potential shoplifters by scanning faces in real time.

5. Healthcare

In the healthcare sector, FRT is used to improve patient care and security:

- Patient Identification: Hospitals use FRT to ensure that patient records are accurately matched with the correct individual.

- Access Control: FRT helps restrict access to sensitive areas of healthcare facilities, ensuring that only authorized personnel can enter secure locations.

Technological Advancements in Facial Recognition Technology

By 2025, advances in facial recognition technology have led to faster, more reliable, and more secure systems. Some of the key innovations include:

1. AI and Machine Learning

AI and machine learning have made facial recognition systems more efficient and adaptable. These technologies enable systems to learn from new data, improve accuracy, and recognize faces in various lighting conditions or from different angles.

2. Liveness Detection

Liveness detection technology has significantly improved the security of facial recognition systems by preventing spoofing attempts. It can detect whether the subject is a live person or a photo/video, making it much harder for criminals to deceive the system.
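
One simple liveness signal is blinking, which a printed photo cannot produce. The sketch below uses the eye aspect ratio (EAR), a published measure computed from six eye landmarks, and counts blinks across video frames. It is only an illustration under assumptions: the landmark coordinates, the `0.2` closed-eye threshold, and the two-frame minimum are hypothetical, and a blink check on its own would not stop a replayed video, so real systems combine several signals.

```python
import math

def eye_aspect_ratio(eye):
    """EAR for six (x, y) eye landmarks ordered p1..p6.

    p1 and p4 are the eye corners; (p2, p6) and (p3, p5) are
    upper/lower eyelid pairs. EAR falls toward 0 as the eye closes.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

def count_blinks(ear_series, closed_below=0.2, min_closed_frames=2):
    """Count closed-eye runs that last long enough and end with the eye reopening."""
    blinks, run = 0, 0
    for ear in ear_series:
        if ear < closed_below:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    return blinks

def looks_live(ear_series, required_blinks=1):
    """Treat the subject as live only if enough blinks were observed."""
    return count_blinks(ear_series) >= required_blinks

# Hypothetical landmarks for an open eye.
open_eye = [(0, 0), (2, 1), (4, 1), (6, 0), (4, -1), (2, -1)]
print(round(eye_aspect_ratio(open_eye), 3))  # 0.333

# Hypothetical per-frame EAR values: a person who blinks vs. a static photo.
video_of_person = [0.33, 0.32, 0.12, 0.10, 0.31, 0.33]
static_photo = [0.33, 0.33, 0.33, 0.33, 0.33, 0.33]
print(looks_live(video_of_person))  # True
print(looks_live(static_photo))     # False
```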

3. Edge Computing

Edge computing is changing facial recognition by processing data locally on the device, which reduces latency and speeds up identification. It also means that sensitive data does not need to be transmitted to central servers, which improves security and privacy protection.
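
The privacy benefit comes from what leaves the device. As a rough sketch, assuming a hypothetical `EdgeMatcher` class, a made-up device ID, toy three-dimensional templates, and an arbitrary distance threshold, the matching runs locally and only a small decision message is sent upstream:

```python
import json
import math

class EdgeMatcher:
    """Hypothetical on-device matcher; enrolled templates stay in local memory."""

    def __init__(self, device_id, enrolled, max_distance=0.5):
        self.device_id = device_id
        self._enrolled = enrolled          # name -> template vector (assumed non-empty)
        self._max_distance = max_distance  # assumed threshold, tuned per deployment

    def process(self, probe):
        """Match locally, then build the only message that leaves the device."""
        name, template = min(
            self._enrolled.items(),
            key=lambda item: math.dist(probe, item[1]),
        )
        matched = name if math.dist(probe, template) <= self._max_distance else None
        # The payload carries a decision, not the image or the face template.
        return json.dumps({"device": self.device_id, "match": matched})

matcher = EdgeMatcher("door-7", {"alice": [0.9, 0.1, 0.2], "bob": [0.1, 0.8, 0.3]})
payload = matcher.process([0.85, 0.15, 0.25])
print(payload)  # {"device": "door-7", "match": "alice"}
```

A cloud-based design would instead upload the image or template and receive the decision back, adding a network round trip and exposing biometric data in transit.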

Ethical and Privacy Considerations

As facial recognition technology becomes more widespread, several ethical and privacy concerns have emerged:

1. Privacy Invasion

The ability of governments, businesses, and other organizations to track individuals in real time raises privacy concerns. Many worry about the potential for mass surveillance without consent, leading to a loss of personal freedom.

2. Bias and Accuracy

Research has shown that facial recognition technology can exhibit biases, with lower accuracy rates for women and people of color. This has raised concerns about the fairness and equity of FRT in various applications, especially in law enforcement and hiring practices.
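
Bias of this kind is usually measured by breaking error rates down by demographic group rather than reporting a single overall accuracy figure. The sketch below computes a per-group false non-match rate, meaning the share of genuine users who are wrongly rejected. The trial data is synthetic and invented purely to show the calculation; the group labels and counts are not real measurements.

```python
from collections import defaultdict

def false_non_match_rate_by_group(trials):
    """trials: iterable of (group, accepted) pairs for genuine attempts only.

    Returns {group: fraction of genuine attempts that were wrongly rejected}.
    """
    totals = defaultdict(int)
    rejected = defaultdict(int)
    for group, accepted in trials:
        totals[group] += 1
        if not accepted:
            rejected[group] += 1
    return {group: rejected[group] / totals[group] for group in totals}

# Synthetic example data (NOT real measurements).
trials = (
    [("group_a", True)] * 98 + [("group_a", False)] * 2
    + [("group_b", True)] * 90 + [("group_b", False)] * 10
)

rates = false_non_match_rate_by_group(trials)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.1%}")
# group_a: 2.0%
# group_b: 10.0%
```

In this made-up data the overall accuracy is 94%, which hides the fact that one group is rejected five times as often as the other.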

3. Regulatory Compliance

With the growing use of FRT, governments worldwide are working on laws to regulate its use and protect individuals’ privacy. The EU’s General Data Protection Regulation (GDPR) and the AI Act are examples of frameworks that govern biometric data collection and usage.

Global Regulatory Landscape

Governments are taking various steps to regulate facial recognition technology and protect privacy. Here are some key regulations:

1. China

In China, facial recognition technology is widely used for various purposes, including law enforcement and surveillance. However, new rules effective in June 2025 will require explicit consent for data collection and restrict the use of FRT in certain sensitive locations.

2. United States

In the U.S., different states have enacted their own laws governing the use of facial recognition technology. There is also ongoing debate at the federal level regarding the regulation of its use, particularly in public spaces and by law enforcement.

3. European Union

The European Union is at the forefront of regulating facial recognition technology. Under the GDPR and the AI Act, the EU has set strict rules for the collection and use of biometric data, with the aim of protecting individuals’ privacy rights.

Market Outlook for Facial Recognition Technology

The facial recognition market is experiencing rapid growth, driven by increased demand for security solutions and advancements in artificial intelligence. By 2025, the market is projected to reach $7.92 billion, up from $6.94 billion in 2024.
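
The year-over-year growth implied by those two figures can be checked with a short calculation. The function name below is just illustrative; the inputs are the two market sizes quoted above, in billions of dollars.

```python
def growth_rate(previous, current):
    """Fractional change from previous to current."""
    return (current - previous) / previous

rate = growth_rate(6.94, 7.92)
print(f"{rate:.1%}")  # 14.1%
```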

Conclusion

Facial recognition technology in 2025 is a powerful tool that enhances security, improves customer experiences, and streamlines operations across various sectors. However, its widespread use comes with significant ethical and privacy challenges that need to be addressed. As FRT continues to evolve, it will be crucial for governments, businesses, and society to balance its benefits with responsible and ethical use.

Recent Developments

- Meta’s AI Glasses: Meta is developing smart glasses with facial recognition capabilities to assist users in daily tasks.

- Melbourne’s AI Surveillance: The City of Melbourne is considering integrating AI and facial recognition into its CCTV systems to improve public safety.

- U.S. Border Enhancements: U.S. Customs and Border Protection is seeking proposals to develop a real-time facial recognition system for vehicle occupants at border crossings.

FAQs about Facial Recognition Technology

How accurate is facial recognition technology in 2025?

- Modern systems achieve accuracy rates exceeding 99.5%, with some reaching up to 99.97% under optimal conditions.

What are the primary applications of facial recognition?

- Key applications include security and surveillance, border control, financial services, retail, and healthcare.

What ethical concerns are associated with facial recognition?

- Concerns include privacy invasion, potential bias, and the need for stringent regulatory compliance.

How are governments regulating facial recognition technology?

- Regulations vary by country, with measures focusing on consent, data protection, and usage restrictions in sensitive areas.

What is the market outlook for facial recognition technology?

- The market is projected to grow significantly, driven by advancements in AI and increased adoption across various sectors.